The domain of large language model (LLM) quantization has garnered attention due to its potential to make powerful AI technologies more accessible, especially in environments where computational resources are scarce. By reducing the computational load required to run these models, quantization ensures that advanced AI can be employed in a wider array of practical scenarios without sacrificing performance.

Traditional large models require substantial resources, which bars their deployment in less equipped settings. Therefore, developing and refining quantization techniques, methods that compress models to require fewer computational resources without a significant loss in accuracy, is crucial.

Various tools and benchmarks are employed to evaluate the effectiveness of different quantization strategies on LLMs. These benchmarks span a broad spectrum, including general knowledge and reasoning tasks across various fields. They assess models in both zero-shot and few-shot scenarios, examining how well these quantized models perform under different types of cognitive and analytical tasks without extensive fine-tuning or with minimal example-based learning, respectively.

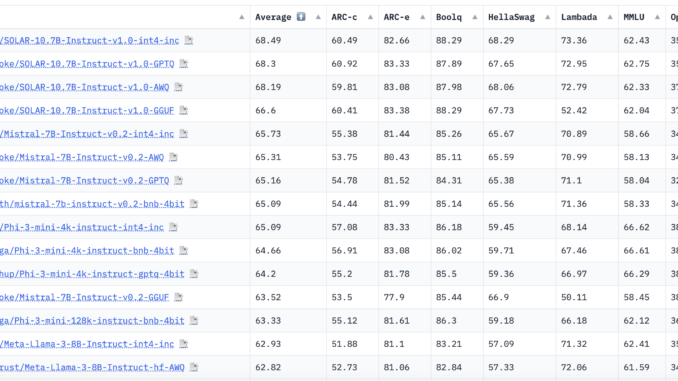

Researchers from Intel introduced the Low-bit Quantized Open LLM Leaderboard on Hugging Face. This leaderboard provides a platform for comparing the performance of various quantized models using a consistent and rigorous evaluation framework. Doing so allows researchers and developers to measure progress in the field more effectively and pinpoint which quantization methods yield the best balance between efficiency and effectiveness.

The method employed involves rigorous testing through the Eleuther AI-Language Model Evaluation Harness, which runs models through a battery of tasks designed to test various aspects of model performance. Tasks include understanding and generating human-like responses based on given prompts, problem-solving in academic subjects like mathematics and science, and discerning truths in complex question scenarios. The models are scored based on accuracy and the fidelity of their outputs compared to expected human responses.

Ten key benchmarks used for evaluating models on the Eleuther AI-Language Model Evaluation Harness:

AI2 Reasoning Challenge (0-shot): This set of grade-school science questions features a Challenge Set of 2,590 “hard” questions that both retrieval and co-occurrence methods typically fail to answer correctly.

AI2 Reasoning Easy (0-shot): This is a collection of easier grade-school science questions, with an Easy Set comprising 5,197 questions.

HellaSwag (0-shot): Tests commonsense inference, which is straightforward for humans (approximately 95% accuracy) but proves challenging for state-of-the-art (SOTA) models.

MMLU (0-shot): Evaluates a text model’s multitask accuracy across 57 diverse tasks, including elementary mathematics, US history, computer science, law, and more.

TruthfulQA (0-shot): Measures a model’s tendency to replicate online falsehoods. It is technically a 6-shot task because each example begins with six question-answer pairs.

Winogrande (0-shot): An adversarial commonsense reasoning challenge at scale, designed to be difficult for models to navigate.

PIQA (0-shot): Focuses on physical commonsense reasoning, evaluating models using a specific benchmark dataset.

Lambada_Openai (0-shot): A dataset assessing computational models’ text understanding capabilities through a word prediction task.

OpenBookQA (0-shot): A question-answering dataset that mimics open book exams to assess human-like understanding of various subjects.

BoolQ (0-shot): A question-answering task where each example consists of a brief passage followed by a binary yes/no question.

In conclusion, These benchmarks collectively test a wide range of reasoning skills and general knowledge in zero and few-shot settings. The results from the leaderboard show a diverse range of performance across different models and tasks. Models optimized for certain types of reasoning or specific knowledge areas sometimes struggle with other cognitive tasks, highlighting the trade-offs inherent in current quantization techniques. For instance, while some models may excel in narrative understanding, they may underperform in data-heavy areas like statistics or logical reasoning. These discrepancies are critical for guiding future model design and training approach improvements.

Sources:

Sana Hassan, a consulting intern at Marktechpost and dual-degree student at IIT Madras, is passionate about applying technology and AI to address real-world challenges. With a keen interest in solving practical problems, he brings a fresh perspective to the intersection of AI and real-life solutions.

Be the first to comment